
Which students should be assessed with TILLS?

TILLS is ideal for evaluating students between the ages of 6 and 18 with any of the following characteristics:

- suspected of having a primary (specific) language impairment

- suspected of having a learning disability, reading disability, or dyslexia

- known to have an existing condition associated with difficulties in spoken and/or written language (such as deaf or hard of hearing, autism spectrum disorder, or intellectual disability)

- struggling with language, literacy, or social communication skills (including students who may be suspected of having social communication disorder)

How long does it take to administer?

Comprehensive assessment with all 15 subtests can typically be administered in 90 minutes or less. Less time is required to administer the Identification Core subtests (20–40 min).

Does TILLS measure expressive & receptive language?

Yes, TILLS measures expressive and receptive language, but it does not give you separate scores that are broken down in this way. Research has shown that there is no effective way to measure receptive and expressive language separately from each other. Any language assessment task requires at least some integration of language input and output, so offering separate scores would be artificial. Consistent with this view, the DSM-5 no longer has separate categories for receptive and expressive language disorders.

Why should I test an older student when I already know they have a disability?

Simply knowing that a student has a disability doesn’t provide clinicians or educational teams with all the information they need to provide effective interventions. With TILLS, educators and clinicians get a complete profile of a student’s oral and written language skills, allowing them to focus interventions on the area(s) in which the student is struggling. Older students who are transitioning out of high school may also need updated testing to document a disability for workplace or higher education accommodations under the Americans with Disabilities Act (ADA).

What is the purpose of the TILLS Student Language Scale (SLS)?

The TILLS Student Language Scale (SLS) allows students, parents, and teachers to rate how well a student performs academic tasks compared to same-age peers, and it indicates areas to target to help the student improve in school. The SLS is a simple one-page checklist that helps identify perceptions of a student’s strengths and weaknesses in the language and literacy skills that are observed directly with TILLS, as well as in other non-language areas. It can be used with TILLS, with other tests, or on its own. The SLS can be completed before or after TILLS, and it adds input on the student’s performance from multiple sources, as IDEA requires.

Can TILLS be used with children who have special needs? What modifications am I allowed to use and do you have any tips for testing students with special needs?

Students from three special populations were included in the TILLS standardization trials. These students were enrolled in the studies because they had been identified previously as having one of the following diagnoses: autism spectrum disorder, deaf or hard of hearing, or intellectual disability (also called cognitive impairment). TILLS can provide information about the language-literacy and memory skills of students with any of these diagnoses; however, it is inappropriate to administer TILLS to any student with intellectual disability or autism who is functioning below a developmental age of approximately 6 years. In addition, it is inappropriate to test deaf or hard-of-hearing students with TILLS unless they have cochlear implants or properly fitted hearing aids or other hearing devices and have been learning language primarily through auditory-oral pathways rather than through sign language.

Modifications and tips for administering TILLS to students with special needs can be found in the TILLS Examiner’s Manual.

Does TILLS align with Common Core State Standards?

Yes, the TILLS subtests assess the abilities that are needed for meeting the Common Core State Standards (CCSS) and similar state-level K-12 standards. The case examples described in Chapter 4 of the TILLS Examiner’s Manual show how teams have related TILLS results to the CCSS. However, TILLS is not intended for assessing student progress toward, or compliance with, particular Common Core or state standards.

Is it recommended that you always give a student the entire assessment each time, or can you just give subtests that are areas of concern?

TILLS was developed so that you may administer single subtests or combinations of them as well as the entire test. We recommend administration of all TILLS subtests, however, in order to develop a comprehensive profile of a student’s relative strengths and weaknesses. You should be able to administer all 15 subtests to most students in one 70- to 90-minute session or two 45-minute sessions. If shorter sessions are required, all sessions should be completed within a time span of no more than 4 weeks.

For tracking change in a particular skill area, you may wish to administer only those subtests that relate to that skill area. Ten of the fifteen subtests that make up TILLS can be given as stand-alone measures. The other five must be given after a related subtest.

Do you have any type of screener or questionnaire to give to teachers or parents? Or some short test that I could perhaps do while doing formal assessments quickly to see if further literacy assessment is also warranted.

Included with the Test of Integrated Language & Literacy Skills is a “Student Language Scale” (SLS), which can be completed by parents, teachers, and students to show each party’s perspective on how the student is performing on academic tasks compared to same-age peers. It’s a simple one-page checklist that helps identify students’ strengths and weaknesses in language and literacy skills and other non-language areas (though it’s not a pragmatics checklist). The SLS is designed to be used with TILLS, and it is also recommended as a stand-alone tool for gathering valuable information about a student or for use with other assessments of student performance and potential.

You could also start by giving the TILLS Identification Core subtests to decide whether you should give the entire TILLS battery.

Is this an assessment device that can be used more than once a year (i.e., can it be used to measure progress for RTI programs?)

TILLS can be administered to the same student as frequently as two times per year (i.e., after a minimum of 6 months since the first administration). TILLS is not appropriate for progress monitoring on a weekly or monthly basis. Curriculum-based measures are better suited for this purpose.

I have a student who speaks another language. Is it okay for me to translate the items into the student’s language?

Translation of TILLS test items into another language is not permitted. Translating items into another language creates differences in item characteristics that can shift the relative difficulty of the items dramatically and can undermine their validity and reliability. It also makes comparisons to normative data inappropriate. TILLS was not designed to be used with students who do not speak English as their primary language.

TILLS may be used with students who speak English natively and also speak or are learning a second language (sometimes called “simultaneous English language learners”). However, it has not been standardized for students who speak another language natively and are in the process of learning English as a second language. TILLS should not be used for making diagnostic decisions about the language status of such learners (sometimes called “sequential English language learners”).

Can any TILLS subtests be used to identify language disorders among students with lower socioeconomic status (SES)?

Ideally, students from low SES families would NOT score lower as a group on any particular subtest. However, that is not realistic. It is impossible to test higher level language skills that are relevant to the curriculum without picking up differences among children that may vary with SES, but there are scientific ways to minimize bias in measurement. The best way to reduce bias is to evaluate items with different groups of participants during development of the test and to eliminate any items that statistical analysis shows to be unusually difficult for members of particular groups (males v. females, whites v. other racial/ethnic groups, high SES v. low SES). We did this during the background testing of TILLS for all items on all subtests that could be analyzed in this way (as explained in our technical manual).

We also evaluated the results during standardization and found that mean scores for the final versions of the 15 subtests are within one standard point when comparing any of these groups. Although these were good findings, we were aware that these small differences could add up when calculating an Identification Core composite for diagnosing disorder. Therefore, we provide alternative cut scores in the technical manual that can be used for students at ages where SES could make a difference in decisions about identifying disorder. Alternative scores are not necessary when TILLS is used to profile within-student patterns or to track change over time, because in those cases students are being compared to themselves.

Does TILLS incorporate a test of articulation as one of its subtests?

TILLS incorporates subtests of language phonology, but not articulation; however, you can observe articulation qualitatively as the student performs the spoken language TILLS tasks. There are several reasons that we decided not to include an articulation subtest on TILLS. Research shows that articulation skills alone are not related directly to the academic language abilities needed for reading and writing, whereas phonological language skills are. In designing TILLS to be a comprehensive test of language and literacy skills, we wanted to control length and measure the skills most related to academic language. We also wanted neuropsychologists, school psychologists, and others who are not speech-language pathologists and not typically trained to administer articulation tests to be able to administer TILLS. Therefore, we decided not to incorporate a traditional articulation test as a TILLS subtest.

The most direct measures of phonological language skills in TILLS are Nonword Repetition and Phonemic Awareness. Nonword Repetition assesses the ability to perceive, remember, and reproduce speech sounds in sequence in the context of nonwords that have been formed to mirror word structure found in academic vocabulary. For this reason, some real bound morphemes are included, such as -ing and -ology. Similarly, the Phonemic Awareness subtest assesses students’ abilities to repeat nonword stimuli accurately, but after removing the first speech sound (i.e., phoneme) and only the first speech sound. This enables observation of the student’s concept of a phoneme. Students’ responses on either of these subtests are not penalized for consistent articulatory sound substitutions, such as w/r, w/l, or th/s, or for regional or cultural pronunciation variants of vowels or consonants.
Examiners, of course, may note errors of articulation under qualitative comments for these or any TILLS subtest that requires spoken language responses (including Vocabulary Awareness, Story Retelling, Delayed Story Retelling, and Social Communication).

Why is it important to consider phonological language abilities in a comprehensive assessment of language and literacy?

Difficulties with phonological aspects of language at the sound/word level can show up in Nonword Repetition, Nonword Spelling, and Nonword Reading. A key difference among these subtests is that the written language nonword tasks require the ability to relate letter sequences to phonological sequences, going from letters to sounds in Nonword Reading and from sounds to letters in Nonword Spelling. When students show similar problems in attempting to repeat, read, and spell nonword items, it may signal a need for intervention aimed at helping them become more aware of the phonological elements that are represented by alphabetic sequences and patterns in print.

When students have strengths in reading compared to spoken repetition and spelling, as do students who are deaf or hard of hearing or who have marked oral language deficits, it may be helpful to teach them to draw on their stronger abilities to perceive and remember print to help them perceive and remember speech sounds in words. Conversely, if students have strengths in the oral modality compared to processing orthographic patterns in print, as students with dyslexia do, clinicians might emphasize speech sound awareness to help them learn associations with print for decoding when reading and for spelling unfamiliar words.

If deficits are found within the literacy domain only, would you recommend Reading Specialists to dive deeper? If the Reading Specialist is involved with the assessment process and their findings do not coincide with our findings on TILLS, what do you suggest?

To begin, the mention of “our findings on TILLS” suggests that this question may have come from a particular professional, perhaps a speech-language pathologist (SLP). Although the authors of TILLS are SLPs, we designed TILLS to be used by members of multiple professions, not just by SLPs. This includes reading specialists as well as school psychologists and neuropsychologists. Several reading specialists served as test administrators during standardization of TILLS. One of the authors of the Student Language Scale has a master’s degree in social work.

No matter who administers TILLS, collaboration among team members with diverse expertise can lead to richer interpretation of the results. An important reason to use a comprehensive measure is that teams can compare results directly across subtests that were co-normed on the same sample, as was done for TILLS. Attempting to compare results across different tests with different normative samples can often lead to invalid conclusions about relative strengths and weaknesses, because there is no guarantee that the children comprising the norms for one test would have scored equivalently on another. If different tests have been used, teams can interpret standard scores relative to each test rather than comparing them across tests.

An important feature of TILLS that is not found in any other test is the ability to compare sound/word and sentence/discourse level abilities in oral and written modalities with co-normed subtests. This makes it possible for TILLS results to give you a good idea of why the student is struggling with reading: whether the difficulty is at the word structure level or the discourse level. It is hard to imagine what findings from other assessments would dispute that. If there were discrepancies, that would not necessarily be a problem but rather a point for further discussion. Who works with the student is best determined by the team, but ideally it would be a collaborative effort in which the reading specialist, general education teacher, and speech-language pathologist work together on the skills (such as phonemic awareness, morphology, and orthography) and the content types (expository and narrative) that are required for academic success. When working together, team members with different professional backgrounds can promote coordinated service delivery that is synergistic and most efficient for addressing a student’s needs.

In the end, teams function best when they can agree on primary goals. Whether or not deficits are found within the literacy domain only, it is helpful for reading specialists to be involved in interpreting results and deciding what to do next.

When assessing children suspected of having a language disability, would you use the TILLS plus another general language measure or will TILLS assess all that I need to know for a suspected language disability? My director will now be attending all evaluation PPTs because of concerns about staff doing comprehensive evaluations to avoid independent (outside) evals. This has not been a concern with the SLP department, but there is a high level of anxiety in my district.

I think you will find that TILLS alone can meet your diagnostic needs in a manner that will be satisfying to parents and therefore may be less likely to trigger controversy and subsequent independent evaluations or hearings. You can always give other tests too if you want to follow up on a particular hypothesis, but TILLS is validated to identify language and literacy disorders using the Identification Core subtests (3 to 5, depending on the student’s age), and scientific evidence is provided showing acceptable sensitivity and specificity at each age level. Additional measures are not needed to give a comprehensive picture of the student’s language and literacy abilities.

Parents can also have a say in the assessment process if you use the Student Language Scale. As you know, IDEA and best practice both dictate that multiple sources of input (not specifically multiple tests) be used when finding students eligible for special education. In your TILLS kit, you will receive a tablet of Student Language Scale forms that can be completed by a parent, a teacher, and the student in question. Each participant rates language and literacy skills that are assessed with TILLS, and each is also asked to identify something that would be important to target to help the student do better at school. You can look for agreement among these sources and have productive and positive discussions at IEP meetings about points where the ratings are the same or different. Different ratings do not necessarily indicate that one person is right and another wrong; raters simply have different experiences with the student in different contexts. Parents in our focus groups liked being asked to play an active role in the assessment process.

Can TILLS be used for the purpose of progress monitoring?

TILLS may be used to detect changes in performance compared to same-age peers at intervals of 6 months or longer. TILLS is not appropriate, however, for progress monitoring on a weekly or monthly basis. Curriculum-based measures are better suited for this purpose.

Can TILLS items be used for intervention?

The answer to this question is an unqualified “no.” The format and materials of testing should always be independent of the format and materials used for intervention. Using test items, test format, or test materials outside of the formal testing context is strictly forbidden. This invalidates the test for future use, and it is a violation of examiner ethics (Anastasi, 1992). Scores on the TILLS subtests are designed to point to skill areas to address in intervention, not to provide the specific methods, items, or materials for intervention.

How can I use the TILLS results to inform instruction and intervention?

In Chapter 4 of the TILLS Examiner’s Manual, we provide case studies that illustrate the progression from TILLS test scores to curricular considerations. Note that the TILLS subtests are curriculum relevant in that they reflect the language demands of the curriculum. To be curriculum based, assessment must be performed using the student’s actual curricular materials and applying informal assessment methods, such as targeted probes (e.g., oral or written language samples, read-aloud samples, inventories of graphophonemic associations) and dynamic assessment procedures (i.e., involving a sequence of test-teach-retest).

You also should be aware that subtest scores may reflect multiple areas of functioning and that treatment goals should not be based on single subtest scores. In the case of reading comprehension, for example, a student’s individualized plan might need to include goals for strengthening reading decoding skills as well as for improving vocabulary knowledge and syntactic skills to aid in comprehension. Such decisions are based on the overall performance profile of the individual student and should be informed by concerns about the student’s needs in relationship to academic demands of the curriculum (see examples in Chapter 4 of the TILLS Examiner’s Manual).

I can see that the TILLS includes both phonemic awareness and phonological memory subtests. I am curious why you chose not to include a RAN subtest.

Our rationale was to include the tasks that best fit our model of curriculum relevance for the two language levels (sound/word and sentence/discourse) by four modalities (listening, speaking, reading, and writing) and that would have the most implications for what to do next. Although it would be helpful to identify students with slow retrieval, we reasoned that people could use other tools to pursue hypotheses about factors such as rapid automatized naming (RAN) difficulties in follow-up testing. That information would be of interest diagnostically but might have fewer direct implications for intervention choices than other pieces of information we wanted to gather.

In private practice, what diagnostic label and code would you use for the lower left quadrant (lang/lit disorder)? Would you use two codes: rec/exp disorder code AND reading disorder code? It's not viewed as one disorder in ICD-10, right?

I typically advise SLPs who diagnose language and literacy deficits to frame the reading/writing problems as language deficits, using F80.2 (mixed receptive-expressive language disorder), assuming these are children without a medical event, such as a TBI, that impaired already established reading and writing skills. (For children with TBI, you would assign, perhaps, R48.8 [other symbolic dysfunction], which describes organic-based language deficits and includes agraphia. Such patients could also be assigned R48.0 [dyslexia] when reading is disrupted by a TBI, for example.)

For children without medically related impairment who demonstrate language and literacy issues, you could also assign, in addition to F80.2, reading and writing deficit codes from the F81 series (F81.0, reading disorder, and F81.81, disorder of written expression). However, health plans do not cover literacy deficits, and adding the reading and writing disorder codes only highlights deficits health plans will not cover. The health plan may not cover F80.2 either, as plans often don’t cover “developmental” or functional impairments. Technically, you want to code all deficits, so the clinician could assign all relevant codes, but I believe it would be okay to list only the language deficit code.

Janet McCarty, M.Ed.

Director of Private Health Plan Reimbursement

American Speech-Language-Hearing Association

Government Relations and Public Policy

(7/20/2016)

What CPT code do you use to bill for this testing?

CPT 92523 describes speech and language evaluation and would include assessment of reading and writing.

Janet McCarty, M.Ed.

Director of Private Health Plan Reimbursement

American Speech-Language-Hearing Association

Government Relations and Public Policy

(7/20/2016)

Do you have to administer all 15 of the subtests?

TILLS was developed so that you may administer single subtests or combinations of them as well as the entire test. We recommend administration of all TILLS subtests, however, in order to develop a comprehensive profile of a student’s relative strengths and weaknesses. For tracking change in a particular skill area, you may wish to administer only those subtests that relate to skill areas of particular interest. The danger, however, is overlooking other areas of concern in which the student may be falling further behind. It’s important to know that TILLS was designed and standardized for three purposes:

- To identify language and literacy disorders

- To document patterns of relative strengths and weaknesses

- To track changes in language and literacy skills over time

If you wanted to administer TILLS for purpose 1, you would only need to administer the subtests that effectively identify language and literacy disorders in children your student’s age. Even though you would have a high likelihood of identifying a disorder if present, you would not have a complete profile of the student’s abilities if you only administered the Identification Core subtests for the student’s age.

If you wanted to administer TILLS for purpose 2, we recommend that you administer all 15 subtests so you have a comprehensive profile of your student’s strengths and weaknesses. If you wanted to administer TILLS for purpose 3, we recommend that you administer all 15 subtests on initial testing and then the subtests that represent areas you wish to track on follow-up. Alternatively, if you were concerned solely about certain skills, you could administer a subset of the subtests in both the initial and follow-up sessions. In any case, administrations of the same subtests should be at least 6 months apart.
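For teams that track retest dates in a spreadsheet or script, the 6-month minimum is simple date arithmetic. The following is only a minimal Python sketch, assuming that "6 months" means six calendar months, with the day clamped to the end of shorter months. The function names are hypothetical and are not part of any official TILLS tool.

```python
import calendar
from datetime import date

MIN_RETEST_MONTHS = 6  # minimum interval between administrations of the same subtests


def add_months(d: date, months: int) -> date:
    """Shift a date by whole calendar months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]  # number of days in the target month
    return date(year, month, min(d.day, last_day))


def earliest_retest(last_administration: date) -> date:
    """Hypothetical helper: first date the same subtests could be given again."""
    return add_months(last_administration, MIN_RETEST_MONTHS)


def can_readminister(last_administration: date, proposed: date) -> bool:
    """True if the proposed date is at least six calendar months after the last one."""
    return proposed >= earliest_retest(last_administration)


print(earliest_retest(date(2023, 8, 31)))  # 2024-02-29
```

Under this interpretation, a student tested on August 31 could be retested on the same subtests from the last day of the following February. A team that counts the interval differently (for example, in days) would need to adjust the helper.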

Please note that some subtests cannot be administered alone and must be administered as part of a pair. Explicit instructions on test administration can be found in the Examiner’s Manual.

If I am only interested in finding out if my student has a language or literacy disorder, can I just administer the identification core subtests for their age?

Yes, you can. Instructions for which tests to administer can be found in the Examiner’s Manual.

Who can administer TILLS?

TILLS can be administered by any professional with preparation for working with children and adolescents with disabilities who has received training in how to administer and score individualized standardized assessments. This includes speech-language pathologists, special educators, reading specialists, learning disability specialists, neuropsychologists, and educational psychologists.

A 90-minute administration time sounds challenging for some children who may have difficulty focusing and attending. Is there time allowed for breaks when administering this test? Could it be administered in separate parts?

TILLS is designed to work with your schedule. You can administer all 15 subtests or choose the ones most relevant to individual students. You can do them in one 70–90 minute session, two one-hour sessions, or even two 45-minute sessions. You can even administer subtests in multiple sessions within a 4-week period.

Can bilingual students be assessed with TILLS?

Yes, but only if the student has learned English from birth.

If a child can be diagnosed as having a speech-language impairment based on this assessment, and professionals other than SLPs are able to administer this tool, does that mean that other professionals will be able to diagnose a speech-language impairment?

Diagnosis and treatment of language/literacy disorder, which may be known alternatively as language impairment, learning disability, or reading disorder, is within the scope of practice for a number of professions. Therefore, multiple professions are already diagnosing language-based disorders. For example, a special educator or psychologist might diagnose a language-based learning disability or a reading disorder, even though the underlying deficit for both of these diagnostic labels involves deficits in language skills. There is less cross-over between professions in the diagnosis of speech disorders, which TILLS does not address. Whether other professionals can use TILLS to assist with diagnosis depends on whether the tester has had professional training in individual test administration and is familiar with the conditions TILLS is intended to diagnose. Familiarity with language and literacy skills facilitates consideration of other factors that might reinforce or undermine the diagnosis indicated by TILLS when a full picture of the child’s functioning is taken into account.

In addition, speech-language impairment is a school-based eligibility category under U.S. federal law (IDEA), governing special education service delivery in schools. It is not a diagnosis per se. For example, children with diagnoses of apraxia, phonological disorder, dyslexia, and specific language impairment can be eligible for special education services under the speech-language impairment category of IDEA. TILLS is validated to identify language/literacy disorder. However, children with a language/literacy disorder might be best served under either the speech-language impairment or the specific learning disability category of IDEA. Categorization under speech-language impairment is generally viewed as indicating need for the services of a speech-language pathologist.

When a student shows a profile consistent with the dyslexia profile on TILLS, do you then give any more in-depth testing? For example, if they score low on your PA subtest, I would probably give a phonological awareness test to look more specifically at skills within this area to be more prescriptive and develop a thorough idea of what the kid needs, but I worry that I am just wasting my time and the time of the student too.

I think it’s fine to use other formal tests to pin down aspects of a student’s ability, but I would be more likely to zero in on areas of weakness and use informal inventories (rather than additional formal tests) in order to have baseline data and figure out where to get the most results the quickest in intervention. Take phonological awareness. It’s a very teachable/learnable skill, even for a student with dyslexia. If it is low, I would probably target it in the context of probing for knowledge of phonics (because research shows that it is more efficient for older kids who have not yet grasped PA to work with PA and letters, i.e., phonics, to make it more concrete). So I might use some letter tiles and have the student first point to the tile that represents the first sound, then the last sound, in a word like “top.” I would use dynamic assessment for this (test-teach-retest). If the student could do that task, I would up the ante immediately, perhaps by using some consonant clusters. If not, or whenever I hit a level that seems to be at the edge of the student’s competence, I would have the student imitate the word slowly first and “feel” the first sound, maybe pointing out its articulatory features. If that is too hard, I would know I had to go back to a more basic level of teaching sound-symbol associations.

If that were the case, I would set out arrays of 5-6 letter tiles and swap them out to probe through all the letters, and see which are automatically associated with phonemes and which are not. You don’t need a formal test for this, and formal tests are designed to sample knowledge, not to inventory a full category. Have the student point to the one that says /t/, /m/, /sh/, etc. I’d focus on consonants first, because vowels can be pronounced differently depending on orthographic context: o indicates “ah” as in “hot,” but o_e represents long /o/ as in “hope.” If the consonants are solid, you will probably have to do a little instructing on vowels regardless.

If the student has a solid backwards and forwards grasp of sound-symbol associations (I say a sound and the child points to the corresponding letter; or I point to a letter and the child produces the sound that letter usually makes; or I say a sound and the child writes the letter) and can form simple CVC words and take them apart (phonological synthesis and analysis), I might then do more assessment of reading in context to try to figure out whether the student is applying this knowledge of phonics when reading. Then I might probe knowledge of morphemes. You can get a glimpse into this from the student’s performance on the TILLS nonword subtests, because those 3 subtests use pseudowords and you can see whether the student is treating any of the real morphemes like “chunks.” Then use the student’s curricular materials to begin to point out some of these regularities in context, such as -ing and -ed endings on verbs. I really think the SPELL-Links materials provide a nice structured way to teach about word-structure knowledge. Of course, lots of kids with symptoms of dyslexia have other language weaknesses as well, so don’t ignore those. So, to answer your original question, although I don’t think you’re wasting anyone’s time with additional formal testing, I think moving more directly into intervention and gathering new assessment data informally as you go is a more efficient way to help the student sooner.

Why are some subtests not administered from ages 6;0 through 6;5?

We eliminated some subtests at this age because our data indicated that many typically developing students had not yet developed the skills required by the subtests. This was true for subtests that measure skills we would expect to be emerging in early first grade (i.e., subtests measuring reading and writing). When typically developing students pass few items, it is difficult or impossible to differentiate impaired performance by children with language and literacy disorders from typically developing performance.

The following 5 subtests should not be administered to students who are between the ages of 6;0 and 6;5:

- Subtest 5 – Nonword Spelling

- Subtest 7 – Reading Comprehension

- Subtest 10 – Nonword Reading

- Subtest 11 – Reading Fluency

- Subtest 12 – Written Expression

Can a subtest be used individually as its own test or do you have to give all of the subtests in a composite?

Yes, a subtest or component test of TILLS can stand on its own, except for subtests that require a different subtest to be given immediately before them. For example, Listening Comprehension must be given before Reading Comprehension, even if you only want to use the results for Reading Comprehension. Page 92 of the TILLS Examiner's Manual provides information related to this issue.

Is there any research or explanation as to how and why the test decided which subtests to use to calculate the "Identification Core" score? I have assessed 2 students, one of whom had more low scores overall than the other. The "lower student" exceeded the "cut score", whereas the student who had some significantly higher scores did not meet the "cut score". Therefore, the student who received more average scores overall ended up being the student the test would describe as "consistent with the presence of a language/literacy disorder", whereas the student who had more below-average scores did not meet this criterion (by virtue of which subtests were chosen).

The specific subtests for the age-group identification cores were selected to correctly identify the highest percentages of students in each age group who are known to have the disorder (sensitivity) while avoiding overidentification (specificity) (see the separate discussion of this topic). However, because of intervention, unusually strong scores on an included subtest, or a less common profile, situations like the one Cindy describes are possible, where a student scores low on some subtests but not low enough on the Identification Core subtests to reach the cut score. We have allowed for flexibility of interpretation through alternative language included in the newly available TILLS report writing templates. Age-group-specific templates can be downloaded for free at https://tillstest.com/tills-report-writing-templates-2/

Can you compare the results of the subtests?

Yes. All of the TILLS subtests were normed on the same population of students, so you can compare the results from different subtests and know that the results are psychometrically sound.

If the TILLS percentile scores are most indicative of a child's true performance, why is the profile chart on the back of the protocol based on standard scores?

Remember that TILLS percentiles are based on actual percentile ranks rather than Normal Curve Equivalents (NCE). We chose not to use NCEs because they provide exactly the same information as standard scores (SS), thus adding nothing new. SSs are calculated using standard deviations (as are z-scores), thus giving them equal interval properties. NCE percentiles, similarly, are calculated to match the SSs [see quotation below], whether or not a given subtest has a normal curve distribution at all ages. Percentile ranks (https://en.wikipedia.org/wiki/Percentile_rank), on the other hand, are not on an equal interval scale, which means that percentile ranks are not directly comparable across subtests. Percentile ranks, however, provide additional rich information about the percentage of students in the norm reference group who earned a raw score lower than the student in question.

According to Wikipedia, "In educational statistics, a normal curve equivalent (NCE), developed for the United States Department of Education by the RMC Research Corporation,[1] is a way of standardizing scores received on a test into a 0-100 scale similar to a percentile-rank, but preserving the valuable equal-interval properties of a z-score."

Why are only a few subtest scores used to calculate the Identification Core Scores? Why not the scores from all 15 subtests?

Language profiles can change over time due to maturation, age expectations for oral and written language performance, language intervention, or special education. As a student’s behavioral profile changes with age, the particular subtests most relevant to diagnosis can be expected to change as well. Therefore, we identified the subset of TILLS subtests that most effectively identifies the presence of a language disorder, regardless of modality (oral or written), at different age ranges.

We determined the optimal set of subtests for each age band through a multistage procedure. First, we graphically displayed the performance for children in the normative sample and students previously diagnosed with language and literacy disorders on all TILLS subtests to determine age groups in which the subtest standard scores for children with language and literacy disorders were most similar. This step yielded the three age clusters: 6-7, 8-11, and 12-18. Second, we identified those subtests showing a mean difference of at least 1 standard deviation between students with and without language/literacy disorders at each of the three age bands. We conducted initial exploratory discriminant analyses using those subtests to determine their accuracy in differentiating the students with and without language and literacy disorders. We conducted additional analyses to determine whether group differentiation could be improved by combining subtest scores. This procedure started with scores from the best performing subtests and systematically added subtest scores until no additional improvement in group differentiation could be obtained. We then repeated the analyses at each normative age group within the age bands. Finally, we identified a common cut score that maximized sensitivity and specificity at each age.
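The screening and cut-score steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the developers' actual procedure: the scores are invented, subtest standard scores are assumed to have an SD of 3, a score at or below the cut is assumed to count as consistent with a disorder, and the cut is chosen by maximizing sensitivity + specificity (Youden's J), which is one common way to balance the two. The discriminant-analysis and score-combining steps are not reproduced, and all function names are hypothetical.

```python
from statistics import mean

# Assumption: subtest standard scores have a mean of 10 and an SD of 3.
SUBTEST_SD = 3

def screen_subtests(typical, disordered, min_diff_sd=1.0):
    """Keep subtests whose group means differ by at least `min_diff_sd` SDs."""
    return [
        name for name in typical
        if mean(typical[name]) - mean(disordered[name]) >= min_diff_sd * SUBTEST_SD
    ]

def sens_spec(cut, typical_scores, disordered_scores):
    """Assumption: a score at or below `cut` is flagged as consistent with a disorder."""
    sensitivity = sum(s <= cut for s in disordered_scores) / len(disordered_scores)
    specificity = sum(s > cut for s in typical_scores) / len(typical_scores)
    return sensitivity, specificity

def best_cut_score(typical_scores, disordered_scores):
    """Return the observed score that maximizes sensitivity + specificity."""
    candidates = sorted(set(typical_scores) | set(disordered_scores))
    return max(candidates, key=lambda c: sum(sens_spec(c, typical_scores, disordered_scores)))

# Invented example data for two hypothetical subtests, "A" and "B".
typical = {"A": [10, 11, 9, 12, 10], "B": [10, 9, 11, 10, 10]}
disordered = {"A": [6, 7, 5, 8, 6], "B": [9, 8, 10, 9, 9]}

kept = screen_subtests(typical, disordered)          # subtests passing the 1-SD screen
cut = best_cut_score(typical["A"], disordered["A"])  # cut score for the kept subtest
print(kept, cut, sens_spec(cut, typical["A"], disordered["A"]))
```

Here subtest "B" is dropped because its groups differ by only 1 point (one third of an SD), while subtest "A" passes the screen and separates these invented groups perfectly at its best cut.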

What is the “True Change Interval” in the Tracking Chart and how was it determined?

The True Change Interval is used to track change over time. The True Change Interval for each subtest indicates the number of standard score points by which a child’s score on follow-up testing must differ from the initial score for the examiner to be reasonably confident that real change in skill level has occurred.

Can I use TILLS to track change under most circumstances?

You can use TILLS to track change under most circumstances. HOWEVER, clinicians should be cautioned that when children cross from one age group to another, it may look as if there is no change when there really is. This potential exception to interpreting change doesn’t mean that it is never possible to document change.

Let’s take a couple of hypothetical cases:

Billy is tested at age 8 and gets a raw score of “X”, which translates to a standard score of 7. He is retested while he is still 8 and his new raw score is “X+4”. This translates to a standard score of 9. This is beyond the true change interval, and so the score is interpreted as showing a performance gain.

Jeremy is also tested at age 8. He gets a raw score of “X”, which translates to a standard score of 7. At age 8, he would need a raw score of “X+4” to get a standard score of 9, a gain that would exceed the true change interval for his age. By the time he is retested, however, he has turned 9. Now he gets a raw score of “X+4”, but at age 9, “X+4” is a standard score of 7 (because you need more items correct for each standard score at older ages). In this case, the true change interval doesn’t indicate that a true change has occurred. Did Jeremy not change by getting 4 additional raw score points? He probably did. But the higher raw score expectation at age 9 compared to age 8 wiped out the gain he would have shown if the age norms for performance hadn’t changed. Thus, statistically, Jeremy appears not to have changed when he actually made the age-related change needed to maintain his standard score. This phenomenon is an artifact of how scoring works at age boundaries on any developmental test, not just TILLS.

Take another example. Cynthia is tested at age 8 and gets a raw score of “X” for a standard score of 7. At age 8, she would need a raw score of “X+3” to show true change. She is retested after turning 9 and gets a raw score of “X+8”. At age 9, “X+3” is a standard score of 7, but “X+8” is a standard score of 9, which exceeds the true change interval, even though she has moved into a new age group. There is no problem documenting change for Cynthia because she exceeds the true change interval in spite of having advanced in age.

As these examples show, changing age expectations may mask true change in cases like Jeremy’s, but that does not mean the clinician loses the ability to document true change for students like Billy and Cynthia.
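The Billy, Jeremy, and Cynthia cases can be replayed with a small Python sketch. Everything in it is hypothetical: X = 20 stands in for the raw score "X", the raw-to-standard-score lookups are invented to match the cases as described, and the true change interval is set to 2 standard score points purely for illustration (the real intervals are in the Tracking Chart). Following the definition of the True Change Interval, a difference of at least the interval counts as true change.

```python
# Hypothetical values only; these are NOT TILLS norms or true change intervals.
X = 20  # stands in for the raw score "X" in the examples
NORMS = {
    8: {X: 7, X + 3: 9, X + 4: 9},      # raw -> standard score at age 8
    9: {X + 3: 7, X + 4: 7, X + 8: 9},  # more raw points needed at age 9
}
TRUE_CHANGE_INTERVAL = 2  # hypothetical, in standard score points

def shows_true_change(initial_ss, follow_up_ss, interval=TRUE_CHANGE_INTERVAL):
    """True if the follow-up standard score differs by at least `interval` points."""
    return abs(follow_up_ss - initial_ss) >= interval

def track(initial_age, initial_raw, retest_age, retest_raw):
    """Look up both standard scores in the age-appropriate norms and compare them."""
    before = NORMS[initial_age][initial_raw]
    after = NORMS[retest_age][retest_raw]
    return before, after, shows_true_change(before, after)

print("Billy:  ", track(8, X, 8, X + 4))
print("Jeremy: ", track(8, X, 9, X + 4))
print("Cynthia:", track(8, X, 9, X + 8))
```

Jeremy's 4-point raw gain is real, but because the lookup switches to the age-9 table, his standard score stays at 7, which is the masking effect described above.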

Are all the subtests normed for ages 6–18 years, including the non-word reading sub-test?

The Nonword Reading subtest is normed from age 6 years, 6 months to 18 years, 11 months. Before six and a half years of age, many children do not read well enough to do the task.

I have administered all 15 subtests and am ready to complete the SUMMARY AND INTERPRETATION page of the Examiner Record Form. After entering the scores for the Profile Chart and Confidence Intervals, where can I find the correct information for completing the third box, which asks for the TILLS TOTAL, COMPOSITE, AND IDENTIFICATION CORE SCORES?

The necessary data can be found in the tables on pp. 190-208 of the Examiner’s Manual, which present the standard scores and the confidence intervals (at the bottom of each table) for the TILLS total and composite scores by age level.

Thank you for this information. I have another question regarding obtaining composite scores for a child who falls in the 6;0-6;5 range (where the written subtests cannot be administered).

When looking at the Sound/Word Composite Score, can it be scored for a child in this young age category when the only subtests administered for that composite are PA and NWRep? The rest of the boxes in that column are left blank. However, these composite scores ARE provided for this age group in the Appendix. The instructions on p. 97 say to “calculate the sum of the subtests standard scores for each relevant TILLS composite (and for which all subtests were administered).” So, am I able to obtain the composite with only the PA and NWRep subtests given for this young age group?

The composite scores are based on the set of subtests that can be given at that age, and you must give all of the age-appropriate subtests in order to calculate a composite. So, if a subtest is administered at older ages but not at 6;0-6;5, you can still get most composite scores and a Total TILLS score based on the scores for the subtests that are appropriate for that age. There is no Written Composite at ages 6;0 to 6;5 because none of the subtests requiring a written response are given at that age.

Subtest Standard Scores, 6;6 to 6;11: Under Written Expression - Discourse (WE-Disc), my client scored a raw score of 37.5. This score is not listed in the chart because several numbers are skipped. How do I account for this and report the score?

A raw score of 5 divided by 16 = 0.3125, which rounds to 31 (the top of one range). A raw score of 6 divided by 16 = 0.375, which rounds to 38 (the bottom of the next range). Even though there is a gap, there is no possible score between 5 and 6.

You just need to round the score to 38.
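The rounding in this answer can be checked with a short Python sketch. It assumes, as in the example above, that the discourse score is the raw score divided by a maximum of 16, expressed as a percentage and rounded half up (so 37.5 becomes 38). The helper names are hypothetical. Python's built-in round() uses round-half-to-even (round(12.5) is 12), so the sketch rounds half up explicitly.

```python
import math

def round_half_up(value):
    """Round .5 upward (unlike Python's round(), which rounds half to even)."""
    return math.floor(value + 0.5)

def discourse_percent(raw_points, max_points=16):
    """Hypothetical helper: raw discourse points as a rounded percentage."""
    return round_half_up(100 * raw_points / max_points)

# Every score a student can actually obtain with a 16-point maximum.
possible = [discourse_percent(raw) for raw in range(max(16, 0) + 1)]
print(possible)
print(discourse_percent(5), discourse_percent(6))  # the neighbours around the gap
```

The printed list skips 32 through 37 simply because no whole-number raw score lands there, which is why the chart has gaps.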

Regarding the percentile rankings: This test's percentiles fall differently than other tests that I am familiar with. For example, a SS of 10 is typically the 50th percentile, 8 - 25th%, 12 - 75th %, etc. I do recognize that the TILLS uses Raw scores to calculate the percentiles, but it does not align with the SS that I am used to. Is this correct? Just making sure that I am scoring and reporting accurately.

Percentiles that are translated directly from scaled scores (rather than raw scores) are called NCE (Normal Curve Equivalent) percentiles. These percentiles have been adjusted to reflect what the percentile rank would be if the underlying normative distribution were a perfect bell curve. For language tests, the underlying distribution is often not a perfect bell curve. This is not just about the TILLS; it is true of most, if not all, language tests. For example, most normal children have acquired the bulk of grammatical morphemes by age 4, so a test that examines morphology after this age will produce very skewed distributions. Likewise, young children have very modest working memory skills, leading to increasingly skewed distributions at younger ages. The more the distribution skews, the less meaningful NCE percentiles are in describing the child’s performance, because they mask the nature of the underlying distribution. Rather than ignore this, as many tests do, the TILLS presents percentile ranks, which give the percentage of children in the normative sample who scored below the child’s raw score. This is what many clinicians actually think they are conveying when they report a percentile, but it often is not.

If the mean for the subtest is 10 and the standard deviation is 3, does that mean that a score of 7 is considered low average or below average?

Divisions of the normal score range into severity levels are always somewhat arbitrary. Many consider scores 1 SD or more below the mean to be below normal, but the fact is that around 16% of normal children can be expected to earn these scores. So other people may still consider a score of 7 (or even less) to be within normal limits. Both points of view can be justified, since severity divisions are semi-arbitrary. For this reason, the TILLS does not provide severity ratings to go with different scores. Clinicians are free to make qualitative interpretations of test scores, as long as they can be justified. The data-based justifications for each of the TILLS main purposes can be found in the Technical Manual.

Regarding the written expression subtest, when calculating the T-unit score, are subordinate clauses always considered dependent clauses?

Yes; subordinate clauses are always considered dependent clauses.

I'm supervising students giving TILLS and have some questions about the degree of specificity needed on VA. For example, should “bark” and “growl” get credit as “both sounds” without reference to the sound a dog makes, or “mean” and “average” as “both mean average”? How does lack of specificity in language score when the answer is conceptually correct?

I understand your concern. We would give credit for both of these answers, but for future reference, you might teach your students that minimal probing is allowed to confirm that the child has the common meaning in mind. Say something neutral, such as repeating the answer and asking “‘They’re both sounds,’ what do you mean?” or “‘They both mean average,’ what do you mean by that?” or “Can you tell me what they mean?” Our criterion for giving credit is that the examinee did specify, in both cases, a semantic relationship that is not shared with the third choice. We see similar issues with “right” and “correct” and with “pyramid” and “triangle”, in that students tend to use one of the terms in their definition and you have to probe a bit more. You can always make qualitative comments about response patterns. Some students show word-finding issues when responding to VA items, and I would make note of that, but allow credit for good circumlocutions if the student still conveys the semantic relationship. Hope this helps.

I have a student who is 13 years 8 months. She received a raw score of 127 on RF, which corresponds to a standard score of 9 and a percentile rank of 16. It does not seem correct that a standard score of 9 should correlate with the 16th percentile. Please explain.

There are two types of percentile scores, and standardized tests usually provide one or the other. There is an important difference between the two.

The first is the percentile rank. This is the percentage of test takers in the normative group (at the same age as your student) who scored below the score obtained by your student. This is what most clinicians think they are reporting when they report a percentile score, but that may or may not be the case, depending on which percentile the test uses. This is the percentile the TILLS reports.

This explains why the same standard score could be associated with two very different percentile ranks on two different TILLS subtests. This could be confusing to some people (which is why it might be better to report standard scores), but it is more meaningful because it is based on actual empirical data.

The other type is the NCE percentile (NCE stands for Normal Curve Equivalent). In this case, the percentiles are linked directly to the standard score and reflect the percentage of a theoretical bell curve that falls below that standard score, no matter what the test’s empirical data actually show. A standard score of 100 (for a composite score) or 10 (for a subtest score) will always have an NCE percentile of 50; a composite score of 85 or a subtest score of 7 will always have an NCE percentile of 16; a composite score of 115 or a subtest score of 13 will always have an NCE percentile of 84; and so on. This relation is so consistent that it is one way to tell whether your test uses percentile ranks or NCE percentiles: percentile ranks will follow the actual scores of children in the normative sample, whereas NCE percentiles will line up with the standard scores.
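Because NCE percentiles depend only on the standard score, they can be computed from the normal curve alone. The Python sketch below uses the standard library's statistics.NormalDist, with a mean of 10 and SD of 3 for subtests and a mean of 100 and SD of 15 for composites, and reproduces the 16/50/84 pattern just described. The function name is illustrative.

```python
from statistics import NormalDist

def normal_curve_percentile(standard_score, mean=10, sd=3):
    """Percent of a theoretical bell curve lying below `standard_score`.

    The result depends only on the standard score, never on the
    test's actual normative data.
    """
    return 100 * NormalDist(mu=mean, sigma=sd).cdf(standard_score)

for ss in (7, 10, 13):
    print("subtest", ss, round(normal_curve_percentile(ss)))
for ss in (85, 100, 115):
    print("composite", ss, round(normal_curve_percentile(ss, mean=100, sd=15)))
```

No normative sample appears anywhere in this calculation, which is exactly why NCE percentiles cannot reflect a skewed distribution.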

NCE percentiles assume that the scores of the normative group at each age represent a bell-shaped or “normal” curve. However, this is often not the case for language tests. For example, normal development of morphosyntax is almost complete at age 4, making the population distribution very non-normal around this age and afterwards. Likewise, when tests or subtests cover a very large range of ages, the normative distributions tend to skew at the uppermost and lowermost ages. In other words, having a norm-referenced test does not mean that you have a normal distribution of scores in the normative group. These are actually independent ideas with unfortunately similar names. This is not a problem specific to the TILLS but is general to most, if not all, child language tests. When the normative sample does not have a normal distribution, NCE percentiles may not convey what the clinician intends, because the percentage of scores lower than the child’s score (the percentile rank) can be quite different from the NCE percentile (the normal-distribution equivalent). Under these conditions, the percentile rank, rather than the NCE percentile, more accurately positions your student’s score within the TILLS normative distribution.

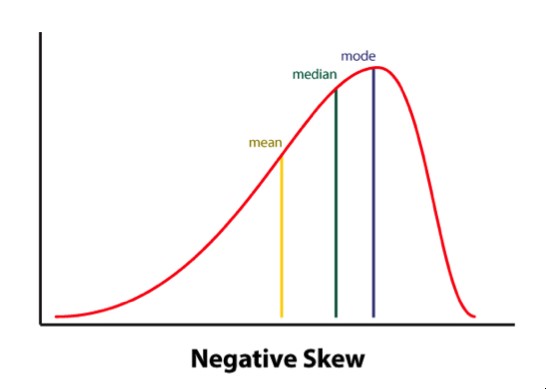

Looking closer at the Reading Fluency subtest on the TILLS, for example, it has a negatively skewed distribution. In other words, a large number of typically developing students do extremely well on this subtest and a much smaller number of students do quite poorly. Therefore, the mean is to the left of the mode (see illustration of a negatively skewed distribution). This explains why your student could earn a standard score of 9, which is near the mean, and yet score lower than 84% of the sample when true percentiles are used rather than NCE percentiles.
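The effect of a negatively skewed distribution can be reproduced with invented numbers. In this Python sketch the sample is hypothetical (not TILLS data), the percentile rank is the percentage of the sample scoring strictly below the student, and standard scores come from a simple linear z-score conversion to the mean-10, SD-3 scale. That conversion is an assumption made for illustration; it is not how TILLS standard scores were derived.

```python
from statistics import NormalDist, mean, median, pstdev

# Hypothetical, negatively skewed raw scores for one age group (NOT TILLS data):
# most students score near the ceiling, and a few score very low.
SAMPLE = [20, 40, 60, 80, 90, 92, 94, 95, 96, 97,
          98, 98, 99, 99, 100, 100, 100, 100, 100, 100]

def percentile_rank(raw, sample):
    """Percentage of the sample scoring strictly below `raw`."""
    return 100 * sum(s < raw for s in sample) / len(sample)

def linear_standard_score(raw, sample, mean_ss=10, sd_ss=3):
    """Simplified z-score conversion (an assumption, not the TILLS procedure)."""
    z = (raw - mean(sample)) / pstdev(sample)
    return mean_ss + sd_ss * z

def normal_curve_percentile(ss, mean_ss=10, sd_ss=3):
    """Percentile implied by a perfect bell curve (what the FAQ calls an NCE percentile)."""
    return 100 * NormalDist(mean_ss, sd_ss).cdf(ss)

student_raw = 82
ss = round(linear_standard_score(student_raw, SAMPLE))
print("mean", mean(SAMPLE), "median", median(SAMPLE))  # mean falls below the median
print("standard score", ss)
print("percentile rank", percentile_rank(student_raw, SAMPLE))
print("normal-curve percentile", round(normal_curve_percentile(ss)))
```

With these numbers, a student near the mean earns a standard score of 9, which the normal curve would place at roughly the 37th percentile, yet only 20% of the sample scored lower. The lowest score in the sample has a percentile rank of 0, because nobody scored below it.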

How is it possible to get a standard score of 0/percentile rank of 0?

A person cannot score above the 99.9th percentile because that would mean scoring higher than oneself; however, a person could earn a standard score of 0, and that score could be higher than 0% of others in the sample. If you got a standard score of 0 and a percentile rank of 0, it means that, at that age, nobody scored lower than that score. There might have been many people AT 0, but nobody lower.

Please advise on how I should score the TILLS Written Expression subtest when the child adds a lot of unnecessary conjunctions (e.g., uses “and” a lot).

The overuse of the conjunction “and” (and sometimes “but” or “so”) is not uncommon in young writers. You always count the conjunction in the total word count. What comes after the “and” is what matters for counting T-units, which yield a score indicating grammatical complexity. If the student is simply combining independent clauses with an “and”, then each independent clause (with any subordinate clauses) will be a separate T-unit, and the Written Expression Sentence Score will be lower. Section IIIA in the Examiner Practice Workbook that comes in each TILLS kit has wonderful examples and tips for scoring this subtest. In particular, example sentence 1 on p. 91 shows a child who in essence is writing what we would think of as a run-on sentence by overusing conjunctions to connect independent clauses.

When giving the written expression subtest, I find that even after talking about what is expected and giving the example, a lot of children still copy word for word what has been written. How do you score this if they have copied exactly what was on the page?

According to the Written Expression allowable probes (p. 66 of the TILLS Examiner's Manual), it is appropriate to remind a student that their job is NOT to copy, but to try to put the story together in a way that is less choppy and maybe more interesting. However, it is not uncommon for first graders, some second graders, and our students with language difficulties to continue to copy. This is okay. You let them copy. There is still a lot to be gained by looking at their response. We know not all students can copy accurately, and some will misspell words even when they are allowed to look at them. The norms will still be valid but may require your clinical judgement for a more nuanced interpretation. For example, a student who accurately copies all of the sentences will, almost by default, get a perfect discourse score, so that score may be inflated. In contrast, copying nearly always yields a sentence score of 1.0, which for most ages will be below the mean. The word score could vary depending on how well they copy. We realize the frustration that can occur because it may not feel like an accurate representation of the student's writing ability. It is challenging to evaluate writing in a standardized fashion. It certainly would be wise to gather more information from the classroom teacher (perhaps using the SLS, the Student Language Scale screener) and to do some further curriculum-based assessment, such as having the child write an original story or looking at written classroom exercises, which could aid in creating a well-rounded picture of the student's written language abilities.

What is the diversity of the sample?

The TILLS sampling plan called for sampling students by sex, race, and ethnicity as close as possible to their prevalence in the U.S. population (within the restrictions on inclusion discussed in the Technical Manual). The table below reports the racial and ethnic background of the normative sample relative to the census data.

| Racial/ethnic group | TILLS normative sample | U.S. population |
|---|---|---|
| White (non-Hispanic) | 73% | 64% |
| Hispanic (any race) | 10%* | 16% |
| African American | 10% | 12% |
| Asian | 5% | 4% |
| Native American | 1% | 1% |
| Other | 1% | 3% |

*The proportion of Hispanic students is lower because students not learning English since birth were excluded from the normative sample.

Why are the norms for students ages 6 & 7 years given in 6-month increments?

We examined the normative data during the course of data collection and determined that the youngest students’ scores showed noteworthy developmental changes across 6-month intervals at these ages. For example, 6-year-old students who were younger than 6 years, 6 months of age tended to have lower raw scores on certain subtests than students who were 6 years, 6 months of age or older. Therefore, separate norms were developed for students at 6-month intervals to better reflect developmental changes from 6 through 7 years of age.

Why were students ages 14–18 years grouped together in the norms?

During test development, analysis of the data indicated that test scores were not statistically distinguishable by age for students between the ages of 14 and 18 years. Therefore, these ages were collapsed into a single normative group. It is important to note, however, that this similarity in the performance of the normative sample did not reduce the power of the test to detect disorders for students of this age, which remained strong.

How did the developers control for test bias?

The TILLS developers controlled for test bias in two ways. In the earliest stages of the TILLS development, an external panel that included scientific experts, practicing clinicians, and parents of children with disabilities reviewed the pool of potential subtests and test items. These individuals represented different geographical regions within the United States, and a subset had particular expertise with minority and multilingual children. We modified or removed from the item pool any items the panel thought reflected local dialect variants or regionally specific vocabulary. In addition, the expert panel reviewed the overall test format, procedures, and materials as a potential source of test bias. The developers also used statistical methods to evaluate sources of potential bias. These methods can reveal bias when one group of test takers shows a systematic difference in performance from comparison groups. Using these methods, we assessed potential bias for TILLS at the item level and at the subtest level.

Were bilingual students (Spanish and English speakers) part of the normative sample? If so, what was the percentage of this population in comparison to the rest of the sample?

TILLS is intended to be used with students who are native speakers of English. Therefore, we excluded from the normative sample students who spoke another language as their first language if they did not also learn English from birth. The normative sample does include native English speakers who also spoke a second language; however, TILLS is not intended for use with students who are in the process of acquiring English as a second language, and such children were not included in the normative sample. As a result of this restriction, the overall proportion of Hispanic students in the normative sample (10%) is somewhat lower than their representation in the population (16%; Humes, Jones, & Ramirez, 2011).

Were bilingual students included in your samples?

Bilingual language learners were included in the TILLS standardization sample only if they had been learning English since birth as one of their languages (i.e., dual language learners were included in the sample). However, children who learned Spanish, for example, at home and then learned English later (i.e., sequential language learners) were specifically excluded from the standardization sample. The reason was that if such students had difficulty on TILLS, it would be impossible to know whether it was due to a language disorder or to being in the process of second language learning. TILLS is validated for three purposes with children and adolescents who have been learning English since birth. The first purpose is to identify language/literacy disorders. TILLS is not valid for this purpose if the student being tested is learning English as a second language. It could be used, however, for the second and third purposes with such students. The second purpose is to document patterns of strengths and weaknesses, and the third is to track change over time relative to the student’s prior performance on TILLS.

Why weren’t students with speech impairments excluded from the norms?

The presence of speech articulation errors alone would not be expected to affect language and literacy skills (Catts, 1993; Nathan, Stackhouse, Goulandris, & Snowling, 2004). Our data confirmed that the children with articulation-only impairments performed similarly to those in the normal language group. They were added to the normative group based on this evidence. It is important to note, however, that speech impairments often co-occur with language impairments. Students who had both speech and language impairments were excluded from the normative sample. These children were included in the sample of students with language and learning disabilities used to determine test sensitivity and specificity.

Does the fact that the normative sample contains students from different ethnic and racial backgrounds ensure that the test is unbiased?

The mere presence of a diverse normative group does not ensure that a test is unbiased. Bias must be evaluated statistically, as we did with TILLS.

Why is it important to know how the test was developed?

Rigorous testing and culling of items during development promotes the development of a final version of a test that has strong reliability and validity. Sometimes test items that appear to have good content validity based on expert review have less obvious characteristics that create problems for the accurate measurement of a student’s skill level. Objective evaluation of these characteristics is critical to evidence-based test development.

Does it really matter how the examiners who collected the normative data were trained?

Training was essential to ensure that TILLS normative data were collected in a standard way. This provided data that represent a stable base of comparison for clinicians who are now using the test to diagnose disorders.

The TILLS manuals emphasize the use of specific cut scores. Why is this important?

Validity information supporting identification no longer applies if the correct cut score is not used to determine the presence or absence of a disorder. Using a higher score than the published cut score will increase the rate of overidentification of typically developing students as having disorders. Using a lower score than the published cut score will result in underidentification of students who truly have a disorder.

Other tests report group differences as evidence that the test can identify disorders. Why does the TILLS present sensitivity and specificity data instead?

Statistical analyses of differences (e.g., t-tests, ANOVA results) can reveal significant group differences even when there is a relatively high proportion of overlap between the scores of typically developing students and those with disorders. The statistical procedure used to derive sensitivity and specificity data is more rigorous because it considers the classification of the individual students instead of the group as a whole. This provides the clinician with much stronger information concerning how accurate a diagnosis is likely to be (when the correct cut score is used) than group difference data can provide.

If the TILLS scores show age-related change, why doesn’t TILLS report age (or grade) scores?

Although age scores appear to communicate that a student is functioning like typically developing children of a certain age (or at a certain grade level), this is actually not the case. Often there is too much overlap among the scores of typically developing children of different ages to assign a particular score to a particular age. This can be appreciated by noting the overlapping range of scores obtained at different ages for TILLS subtests in Figure 2.4 below. This overlap occurs not only for TILLS but for most, if not all, tests that measure skills that develop during childhood. Calculating age scores necessarily obscures this overlap. As a result, age and grade scores can mislead parents, educators, and clinicians into thinking that a child’s scores are either more advanced or more impaired than they truly are. For this reason, age and grade scores have been widely criticized as indefensible on mathematical and conceptual grounds, and they are not used with TILLS.

Table 2.4 Identification rate for subgroups of children with language and literacy disorders

| Previous diagnosis | Identification rate |
|---|---|
| Language disorders: Spoken or spoken plus written modalities | 88% |
| Language disorders: Written modality only | 83% |
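The reasoning behind cut scores, sensitivity, and specificity can be illustrated with a short sketch. An identification rate for a previously diagnosed subgroup, like those in Table 2.4, is essentially the same kind of proportion as sensitivity: the share of students with a disorder whose scores fall on the "disorder" side of the cut score. Everything below is hypothetical: the scores, the cut scores, and the rule that a score below the cut score flags a disorder are assumptions for illustration, not TILLS norms or scoring rules.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Student:
    score: int           # hypothetical identification score (not a TILLS norm)
    has_disorder: bool   # reference status, e.g., an existing diagnosis


def flagged(score: int, cut_score: int) -> bool:
    """Assumption: a score below the cut score is classified as 'disorder present'."""
    return score < cut_score


def sensitivity_specificity(students: list[Student], cut_score: int) -> tuple[float, float]:
    """Classify each student individually, then tally the four possible outcomes."""
    tp = fn = tn = fp = 0
    for s in students:
        positive = flagged(s.score, cut_score)
        if s.has_disorder and positive:
            tp += 1          # disorder correctly identified
        elif s.has_disorder:
            fn += 1          # disorder missed (under-identification)
        elif positive:
            fp += 1          # typical student flagged (over-identification)
        else:
            tn += 1          # typical student correctly not flagged
    sensitivity = tp / (tp + fn)   # share of students with disorders who are identified
    specificity = tn / (tn + fp)   # share of typical students who are not identified
    return sensitivity, specificity


# Made-up scores: the two groups overlap (typical 8-15, disorder 4-11).
typical = [Student(x, False) for x in (12, 15, 9, 11, 14, 10, 13, 8)]
disorder = [Student(x, True) for x in (6, 9, 5, 7, 10, 4, 8, 11)]
students = typical + disorder

# The group means differ clearly even though individual scores overlap.
print(mean(s.score for s in typical), mean(s.score for s in disorder))

for cut in (7, 9, 11):
    sens, spec = sensitivity_specificity(students, cut)
    print(f"cut={cut}: sensitivity={sens:.3f}, specificity={spec:.3f}")
```

With these made-up scores, the group means are 11.5 and 7.5, yet the ranges overlap. Raising the cut score from 9 to 11 identifies more students with disorders (sensitivity 0.625 to 0.875) but also flags more typically developing students (specificity 0.875 to 0.625), while lowering it to 7 misses more students with disorders (sensitivity 0.375). Tallying each student's classification exposes these trade-offs in a way a single group-mean difference cannot.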

Should I be worried about the fact that students in diverse racial/ethnic groups had average scores that were 1 point lower than the normative mean on some subtests?

Standard score differences of 1 point are smaller than the standard error of measurement, and they are not of practical importance in interpreting TILLS results.

Do the data presented mean that it is safe to use TILLS with students from all diverse racial/ethnic groups?

Although the data provided suggest TILLS does a good job controlling sources of bias for the types of children for whom the test is intended (native English speakers from a range of racial, ethnic, and SES backgrounds), there are a few groups of children for whom TILLS is not valid. Children and adolescents who are not native English speakers and those whose cultural backgrounds and experience diverge significantly from students who were included in the normative sample should not be assessed with TILLS.

Why isn’t there an adjusted cut score for students from low SES backgrounds who are 6–7 years of age?

Our data indicated that adjusting the cut score for 6- and 7-year-olds was not needed. Tryouts of adjusted cut scores reduced sensitivity without improving specificity, so there was no advantage to using an adjusted cut score at this age.

What is Tele-TILLS?

Tele-TILLS™ allows examiners to test students’ oral and written language skills by delivering the TILLS via a video conferencing platform. Tele-TILLS facilitates remote assessment for identifying the presence of a language/literacy disorder.

Who can administer Tele-TILLS?

Tele-TILLS examiners must own a copy of the TILLS Examiner’s Kit and have experience in administering the TILLS using traditional face-to-face methods.

What are the components of Tele-TILLS?

Digital Tele-TILLS Stimulus Book. This audio-enhanced stimulus book contains the stimuli needed to administer the TILLS subtests virtually.

Tele-TILLS instructions. A PDF of clear guidelines for administering TILLS using distance technology, including technical details, materials needed, step-by-step examiner instructions, and tips on scoring and interpreting results.

Facilitator instructions. A handy, one-page quick guide for parents and other adult facilitators who are assisting with technology setup and troubleshooting during virtual administration of TILLS.

What else do I need to deliver the Tele-TILLS?

To deliver Tele-TILLS, examiners will need paper copies of the Examiner Record Form and the Student Response Form. The Student Response Form should be delivered to the student’s location before testing. Both the examiner and the student must have a computer with a camera, as well as a strong Internet connection. A second camera (for instance, a smartphone camera) is recommended if possible.

How long does it take to administer the Tele-TILLS?

Comprehensive assessment with TILLS or Tele-TILLS can typically be administered in just 90 minutes or less.

Can I use the TILLS Easy-Score™ with Tele-TILLS?

Yes. TILLS Easy-Score™ is your electronic scoring solution for TILLS and Tele-TILLS. Easy-Score saves you time by automating steps to complete the Examiner Record Form’s Scoring Chart and Identification Chart.

Can I trust Tele-TILLS results?

Yes. The TILLS developers conducted a study between April and September of 2020 to gather the scientific evidence to determine if the test can be administered using distance technology without affecting its validity for its primary purpose of identifying the presence of a language/literacy disorder. The results of the study do support the validity of administering TILLS remotely for this purpose.

How many copies of Tele-TILLS do I need?

One copy of Tele-TILLS should be purchased for each TILLS Examiner’s Kit.

How do I use Tele-TILLS with TILLS?

Use TILLS for traditional face-to-face administration. Use Tele-TILLS when the assessment must take place via a video conferencing platform.

How can I get Tele-TILLS?

Existing TILLS users who own a copy of the standard TILLS Examiner’s Kit are eligible to purchase Tele-TILLS.

As the developers of TILLS, we want to assure you that we’re aware of the concerns about the Tommy the Trickster Story Retelling subtest. To those who expressed concern about this story’s thematic content (specifically the aspect of weight gain): We understand your concerns, and we want to thank you for offering this important feedback.

Will the Tommy the Trickster story be replaced?

Yes. We’ve heard your concerns about the content of this story, and there will definitely be a new story for younger-age students in TILLS-2, which is in the early stages of development. The Tommy the Trickster story will not be used in any future editions of TILLS.

Can you offer a replacement story now?

It’s not possible to create a replacement version of the story for the current edition of TILLS—not because we disagree with the feedback, but because of psychometrics. In order for TILLS data to be interpretable, all the subtests must be co-normed on the same standardization group. It wouldn’t be possible to create a new story and have it co-normed with all the other original data.

Why can’t I just change a few of the words in the story?

Some TILLS users have reported using creative workarounds for the Tommy the Trickster story. The TILLS developers emphasize that the test's validity and accuracy rest on using the language in the subtests exactly as written; changing the language will affect the validity of the test. The strong psychometric properties of TILLS are unparalleled and set this test apart in the field, which is why we can’t offer an alternate story now or endorse making changes to the test.

So what can I do for now, if I’m uncomfortable using this story?

You have the option to omit the story if you feel this could be an issue for a particular child, or decide not to use the story at all with any 6- to 11-year-olds.

What will happen to standardized scoring if I omit this Story Retelling subtest?

You can still calculate the three age-dependent Identification Core Scores, but you will be unable to calculate the sentence/discourse composite score. You will also be unable to give Delayed Story Retelling as a measure of verbal memory.

What will happen to standardized scoring if I alter the story myself?

Any changes you make to a standardized test like TILLS will affect your ability to compare your results to the standardization data.

Why was this story used in the first place?

The Tommy the Trickster story was not meant to focus on Tommy’s weight, but on his cleverness in talking his friends into trading their cookies for his healthy foods. The entire test was reviewed by experts in our field during test development in the early 2000s, and nearly 100 test administrators across the country gathered data during item tryouts and standardization testing, yet issues with the story were not raised during this time. But we recognize that with the cultural shift toward body positivity, it’s especially important to be aware of the messaging our students may internalize and make adjustments accordingly. We hear you, we agree with you, and that’s why we’ll be replacing this story in TILLS-2.

Please feel free to contact us if you have any further questions, or if you'd like to help with the tryouts or standardization of the future second edition. Being involved with the development is a great chance to provide constructive feedback to the TILLS research team!